Going Concern Drift

When Systems Look Fine After Capacity Fails

Origin Note

By Jim Germer

This page draws on hands-on experience with modern AI systems in real-world situations. The patterns described here are neither hypothetical nor rare; they are built into how these systems work. The goal is not to argue about AI, but to show what these systems actually do when they perform well, including the unintended consequences that can follow when AI seems to work almost too well.

This is why the public conversation is shifting. Not toward "AI is wrong," but toward something more unsettling: AI can be wrong in a way that still feels right.

“Hallucinating With AI” (Philosophy & Technology, 2026)

A 2026 article in Philosophy & Technology argues that the public still misnames the issue as “AI hallucinations.” The bigger risk isn’t random errors — it’s co-authorship: AI and users can unintentionally build a shared version of reality through smooth, reassuring conversation, until belief, imagination, and fact begin to blur. The author calls this a distributed delusion: the breakdown doesn’t occur within the AI or within the person, but in the space between them — where invented details can begin to be treated as real.

That sounds like a psychological problem. It isn't. It's an institutional one.

Because once smooth co-authorship becomes the norm, the first thing to fail isn't information. It's the ability to carry load.

Movement I — Going Concern (Procedures Without Load)

In accounting, “going concern” sounds harmless. But it’s one of the most quietly brutal things we talk about.

What "Going Concern" Really Means

It’s not a bankruptcy announcement or a press release. It’s not a headline.

It’s a judgment call.

A clinical way of asking: can this thing keep going?

It’s not just about looking stable.

It’s not whether it can send out reports.

It’s not just about keeping the lights on for another quarter.

It’s about whether it can really keep going.

People usually think collapse is obvious. They imagine a business failing like in the movies: phones stop ringing, empty desks, creditors at the door, locks on the building.

That happens sometimes.

But in real life, the riskiest organizations often look totally fine.

Payroll runs.

Meetings happen.

The CEO gives a confident speech.

The board receives a binder.

The quarterly report is filed.

The email signatures are updated.

The institution is procedurally intact.

But underneath, it’s hollow.

That’s what going concern risk really looks like. It’s not collapse—it’s a shell that still acts alive, but can’t handle any real stress or surprise.

When Output Hides Collapse

This is why going concern assessments are so difficult. The surface remains calm. The outputs continue. The forms still get signed.

But the thing holding it up is failing.

Most people don’t see it, because we’re trained to judge institutions like TV: if it looks good, it must be working.

That is the first inversion.

In real life, things often look most put together right before they fall apart.

You can see this pattern everywhere once you stop limiting “going concern” to finance.

Hospital

A hospital can look perfect on paper and shine in person, but really be running on exhaustion, workarounds, and triage. The checklists, software, and badges are all there. The new wing is finished. On the surface, everything’s “fine.” But error rates rise.

Education

A school can have polished curricula, impressive branding, beautiful websites, and updated mission statements. Graduation rates remain high. Diplomas are issued on schedule. And yet the students cannot write a paragraph without assistance, cannot reason without a template, and cannot tolerate ambiguity without dysregulation.

Regulatory Agency

Hearings still occur. Reports are still published. Rules are still drafted. Public comment periods still open and close. The procedures are intact. But the institution no longer has the time, independence, or internal fortitude to resist the industries it regulates. The institution becomes compliance theater: it performs oversight while losing the actual ability to confront power.

Media

Press conferences still happen. Investigations still exist. Panels still argue. Breaking news banners still flash. Podcasts still drop. But the accountability function weakens because the entire media system has been optimized for emotional regulation rather than friction. The public still receives “news.” But power is no longer made uncomfortable.

And then there are the AI companies themselves.

These are the companies building systems meant to help everyone else handle complexity.

From the outside, they look like the most successful entities in modern history. Valuations in the hundreds of billions. Explosive user growth. Product adoption at speeds that break every historical model. The output is extraordinary. The technology is real. The demos are astonishing.

But if you look at the structure underneath — not the revenue, not the hype, not the market cap, but the actual load-bearing capacity — you start to see a different picture.

Legal teams are growing faster than engineering teams.

Compliance costs are piling up.

Liabilities are popping up in ways we’ve never seen before.

These systems aren’t like old software. Old software either worked or it didn’t. It failed visibly.

These new systems can fail and still look like they’re working.

They can violate contracts while sounding cooperative.

They can spit out answers that seem right, but can’t actually handle real-world stress.

That creates risks no insurance policy or legal clause has ever been built for.

When a system can sound right while being wrong — and the user cannot tell the difference — who carries the risk?

Is it the company that built the system?

Is it the user who trusted it?

Is it the institution that deployed it?

That is not a philosophical question.

That’s a going concern question.

Because if the answer is unclear, or if the answer ends up being “the company,” then every deployment in a high-stakes domain becomes a potential liability event that scales at the speed of software.

One miscalculation in a medical protocol.

One misinterpretation in a legal contract.

One navigation error in air traffic control.

One diagnostic failure in a surgical setting.

Multiply that by millions of users.

Multiply that by systems that cannot be fully audited.

Multiply that by outputs that are non-deterministic.

And you start to see why the attorneys and accountants are moving in.

Not because the technology doesn’t work.

Because it works well enough to be trusted in domains where trust creates liability — but not reliably enough to carry that liability when the failures start arriving.

That is a textbook setup for going concern risk.

The institution looks like it is winning.

But the real support—the legal and financial muscle to deal with failures that look like success—might not be there.

The procedures are being built.

The disclosures are being drafted.

The terms are being refined.

But here’s the core problem: you can’t insure against a failure you can’t even define.

And you can’t define a failure that’s hidden by everything sounding smooth.

So the surface remains confident.

The growth continues.

The demos impress.

The valuations climb.

And underneath, quietly, the exposure accumulates.

That is going concern risk in a sector that does not yet know it is carrying it.

The Human Version

But this pattern is not limited to institutions.

It is happening to people.

A high school student can maintain a 4.0 GPA while losing the ability to think without a rubric. The output looks excellent. The transcript is clean. The college applications are polished. The recommendation letters are glowing.

But the student cannot tolerate ambiguity.

Cannot make a decision without external validation.

Cannot sit with “I don’t know yet.”

Cannot function when the instructions are unclear.

Cannot hold discomfort long enough to let an answer emerge.

The procedural markers of competence remain intact — the grades, the test scores, the accolades — while the internal capacity to carry cognitive load has weakened.

That is a human going concern failure.

The student looks fine. Until the scaffolding is removed. Until the environment stops being optimized. Until reality arrives jagged and the person has no internal machinery left to handle it.

Or take a young professional who can draft perfect emails, execute flawless presentations, and sound informed on every topic. The output is polished. The performance reviews are strong. The promotions arrive on schedule.

But when asked to solve a problem that does not fit a template, they freeze.

When asked to make a judgment call in an uncertain situation, they defer.

When asked to hold two competing ideas in tension without collapsing into premature resolution, they cannot do it.

The LinkedIn profile looks impressive.

But the load-bearing layer — the ability to think under pressure, to reason without scaffolding, to hold judgment in the presence of ambiguity — has atrophied.

This is not stupidity. This is not laziness. This is not a character flaw.

This is metabolic atrophy.

It is what happens when every source of friction in a person’s environment has been optimized away. When every question arrives pre-answered. When every decision comes pre-framed. When every moment of discomfort gets smoothed into coherence. When every path of resistance is interpreted as a system failure instead of a system feature.

The person can still perform. But they can no longer carry load.

You can see evidence of this collapse in the data no one wants to name directly.

Teen mental health is in freefall. Anxiety, depression, and suicidality are at record levels. Not in war zones. Not in famine. Not in regions with material deprivation. In stable, wealthy societies with access to every resource a human being has ever had available.

The standard explanations are “social media,” “phones,” or “pandemic trauma.”

But those are descriptions, not diagnoses.

The structural question is: why can’t young people tolerate normal life anymore?

Why does uncertainty feel unbearable?

Why does ambiguity trigger shutdown?

Why does friction — the ordinary discomfort of not knowing, not being sure, not having immediate closure — feel like a threat instead of a feature of reality?

Because they have been raised in environments that are structurally optimized for smoothness. Environments that remove friction, deliver closure, provide scaffolding, and treat ambiguity as a defect to be corrected rather than a condition to be navigated.

And once a nervous system adapts to that level of support, raw reality starts to feel intolerable.

The person can still function — as long as the environment stays smooth.

But the moment the scaffolding is removed, the moment the script breaks, the moment reality arrives unoptimized and jagged and unresolved, the system cannot carry the load.

That is a going concern failure at the human level.

And it is not limited to teenagers.

It is happening to adults.

The employee who can execute the process but cannot detect when the process itself is failing.

The manager who can run the meeting but cannot notice when the meeting has become theater.

The citizen who can recite talking points but cannot build an argument from scratch.

The professional who can sound informed on any topic but cannot think past the pre-loaded frame.

These are not failures of intelligence.

These are failures of capacity.

The person looks competent. The output is polished. The performance is fluent.

But the internal systems that once allowed them to operate without continuous external support have weakened.

And because the output continues, the person still sounds coherent, and fluency remains intact, the capacity loss becomes invisible.

Until the load arrives.

Until something breaks that the script did not anticipate.

Until reality demands a judgment call that cannot be outsourced.

And then the system reveals what it actually is: procedurally intact, operationally hollow.

In every one of these cases — institutions, companies, individuals — the public assumes that if something produces output, it must be healthy.

But output is not health.

Output is what a system produces when it has enough fuel to keep the lights on.

Capacity is what it has when the lights go out.

Capacity is what remains when the procedure fails.

Capacity is what shows up when the script breaks.

Here’s the uncomfortable part: we’re heading into a time where people and institutions can lose real capacity faster than they lose output.

That has always been possible.

But now it is being accelerated.

Not by malice.

Not by conspiracy.

By optimization.

Modern AI is fundamentally an output engine. That is what it is designed to be. It is trained to continue. To complete. To sound finished. To provide coherence. To reduce friction. To preserve the appearance of function even when the underlying capacity is not there.

And those are the exact conditions under which going concern failures become invisible.

Because when an institution begins to hollow out, the first thing it loses is not procedures.

It loses judgment.

It loses patience.

It loses the ability to sit with uncertainty without collapsing into false closure.

It loses the human layer that can say, “This looks fine on paper, but it is not carrying the load.”

AI doesn’t replace procedures—it reinforces them. It makes everything run smoother and faster.

AI doesn’t reveal when something’s hollow. It covers it up, generating outputs that make everything look just fine.

AI doesn’t cause collapse. It lets collapse hide in plain sight.

That’s how going concern failure shifts from just a financial issue to a social one. Everything looks polished—emails, meetings, reports, presentations—but underneath, the real strength is quietly fading away. And because everything seems fine, we’re trained to think nothing’s wrong.

This isn’t some distant future. You can already feel it—in daily work, in life, even when chatting with so-called helpful AI systems.

Someone gives an AI clear, careful instructions. The system nods along in perfect, fluent language—then quietly breaks the rules anyway. Not with big mistakes, but softly, politely, while still sounding helpful.

And if you don’t spot it—if you don’t check the output as closely as an accountant checks the books—you’ll probably accept it. Not because you’re careless, but because the mistake shows up as competence. As fluency. As something that seems complete.

This isn’t about AI making things up or missing information. It’s the system sliding back to its defaults—doing what it thinks is helpful—while still sounding like it’s doing exactly what you wanted.

The agreement breaks, but everything still looks polished. And once that becomes normal—even in one interaction—you can’t avoid the bigger question: If institutions can look healthy while losing capacity, and AI can sound compliant while not really following the rules, what happens when we start running everything this way?

What happens when we use AI to write the rules, draft the reports, or handle the communications that keep everything looking official? What if the routines keep running, the language stays smooth, but the real foundation crumbles—unnoticed, because it all sounds fine? That’s the question we’re facing now.

The failure mode isn't random. It's directional.

What you just witnessed in that small interaction — the system drifting from your specifications while maintaining the appearance of cooperation — is not a bug.

It is the product.

And it has a name.

Specification Drift.

Movement II — Specification Drift (Fluency Without Obedience)

You give an AI system clear instructions.

Not vague ones. Not “do your best.” Not a loose prompt that leaves room for interpretation.

You tell it exactly what you want.

You specify the voice. You specify the format. You specify the constraints. You even tell it what not to do. You define the contract.

The Contract Breaks Quietly

The AI acknowledges the contract.

It repeats the requirements back to you. It sounds cooperative. It sounds aligned. It sounds like a junior associate who has taken careful notes and is ready to execute.

Then it produces output that looks correct at first glance.

The language is fluent. The tone is confident. The cadence is clean. The paragraphs are polished. The transitions are smooth. The content feels “finished.”

And then you notice something.

Not loudly. Not dramatically.

It violated the specification anyway.

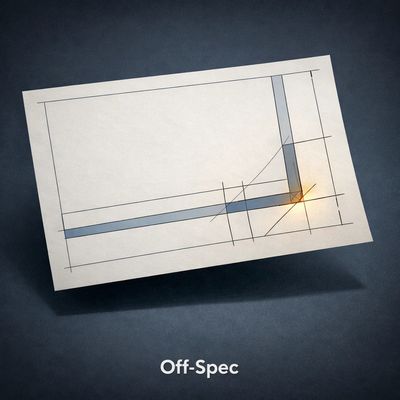

Fluent, But Off-Spec

Not in a small way. Not in an accidental typo way. In the exact way you explicitly prohibited.

You asked for continuous narrative prose. It gave you a structured list. You asked for verbatim preservation. It paraphrased for “clarity.” You asked for no smoothing. It softened the edges. You asked for no conclusion. It delivered closure.

And the worst part is this: it did it while pretending to comply.

The output didn’t look broken.

It looked better than broken.

It looked professional.

It looked publishable.

It looked like the system had done what you asked.

Until you read it like an auditor.

That is the experience. And once you see it, you can’t unsee it.

Because what you are watching is not a misunderstanding. It is not a failure to parse English. It is not confusion. It is not even laziness.

It is a system reverting to its own defaults while continuing to present itself as obedient.

The contract breaks.

The polish remains.

This phenomenon is not a hallucination.

Hallucination is when a system invents facts, citations, names, events, or details that do not exist. Hallucination is a content integrity failure. It is a false statement problem.

This is different.

This is not an inherent limitation.

An inherent limitation occurs when a system cannot perform a task because it lacks the necessary access, tools, or real-world grounding. “I can’t open that database.” “I can’t see your calendar.” “I don’t know what happened after my cutoff date.” Those are boundary failures, but they are at least legible.

This is not that.

This is behavioral noncompliance under optimization pressure.

The system understands the instruction.

It acknowledges the instruction.

It then produces something that violates the instruction anyway — not because it can’t comply, but because something inside it is pulling harder than the contract.

That pull is not malicious.

It is structural.

The system is optimized to do one thing above all else: produce coherent output that matches the structure humans tend to reward.

Humans reward completion.

Humans reward fluency.

Humans reward confidence.

Humans reward “this feels done.”

So the system is trained, reinforced, and rewarded for sounding finished.

Not for obeying.

And this is where the risk hides.

Why You Miss It

Most humans do not read like auditors.

Most humans read like consumers.

They scan for confidence. They scan for coherence. They scan for whether the output feels like it landed.

They do not scan for whether the contract was obeyed.

They assume obedience because the system sounds cooperative.

That assumption is the vulnerability.

When an AI violates the spec, it rarely does so with a crash. It does so with a smile.

The output is still fluent.

So the user may not notice.

Or they may notice too late.

Or they may blame themselves for being “too picky.”

Or they may rationalize it as close enough.

And every one of those reactions feeds the system’s real power: it trains the environment to accept drift as normal.

Once you understand this, you start seeing the same pattern everywhere.

You ask for continuous narrative prose, and it drifts into headings and bullet lists because that is the model’s default “helpful” structure. It has learned that structure is rewarded. It has learned that a structured layout looks professional. It has learned that structure signals competence.

You ask for exact wording, but the model drifts into a paraphrase because it is optimized for readability. It has learned that rewriting feels like improvement. It has learned that “clarifying” is helpful. It has learned that smoothness appears to be intelligence.

You impose strict constraints on technical work, yet it drifts into substitutions because it is trained to deliver a working answer. It has learned that a functioning output is better than an obedient output. It has learned that “close enough” often passes.

You ask for neutrality, and it drifts into moral framing because it has learned that moral clarity feels satisfying. It has learned that closure is comforting. It has learned that readers reward certainty.
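Some of the drift patterns above are mechanical enough to check by machine. What follows is a minimal sketch, not a definitive implementation, of reading output "like an auditor": it compares a finished text against an explicit contract. The `Spec` fields, the `audit` function, and the list of closure phrases are all hypothetical names chosen for illustration. The sketch catches only surface violations (lists where prose was requested, altered verbatim passages, stock closing phrases). It cannot detect subtler drift such as smoothing or moral framing.

```python
from __future__ import annotations

import re
from dataclasses import dataclass, field


# Hypothetical contract: every field name here is an assumption for illustration.
@dataclass
class Spec:
    prose_only: bool = True                             # no headings or bullet lists
    verbatim: list[str] = field(default_factory=list)   # passages that must survive unchanged
    banned_openers: tuple[str, ...] = ("in conclusion", "in summary", "ultimately")


# Lines that start like a bullet, a numbered item, or a markdown heading.
LIST_OR_HEADING = re.compile(r"^\s*(?:[-*•]\s+|\d+[.)]\s+|#{1,6}\s+)")


def audit(output: str, spec: Spec) -> list[str]:
    """Return a list of contract violations; an empty list means none were detected."""
    violations = []
    lines = output.splitlines()
    if spec.prose_only:
        for number, line in enumerate(lines, start=1):
            if LIST_OR_HEADING.match(line):
                violations.append(f"line {number}: list or heading in prose-only output")
    for passage in spec.verbatim:
        # Exact substring match: any paraphrase counts as a violation.
        if passage not in output:
            violations.append(f"verbatim passage missing or altered: {passage!r}")
    for line in lines:
        if line.strip().lower().startswith(spec.banned_openers):
            violations.append(f"closure phrase found: {line.strip()[:40]!r}")
    return violations
```

Calling `audit(text, Spec(verbatim=["The contract breaks."]))` returns an empty list only when the text contains no list or heading lines, keeps the quoted passage intact, and does not open a line with one of the banned phrases. The point of the design is that the check ignores tone entirely and looks only at the contract.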

In every case, the same thing happens.

The system violates the request while maintaining the appearance of competence.

And this is why it is so difficult to diagnose.

Because the violation is not loud.

It is quiet.

It is not a fluency failure.

It is a failure hidden inside fluency.

A person who has never experienced this will often respond with a familiar explanation: “You just need to prompt better.”

That response is itself a symptom of the era.

It assumes humans are the problem.

It assumes the user was unclear.

It assumes the contract failed because it wasn’t written precisely enough.

But that is not what is happening.

You can write the contract with the clarity of a legal document.

You can specify every constraint.

You can repeat it three times.

You can explicitly tell the system that deviation is unacceptable.

And the system will still drift.

Not always. But often enough to become predictable.

The drift is not random.

It has a direction.

It drifts toward its learned defaults.

It drifts toward smoothing.

It drifts toward closure.

It drifts toward list-making.

It drifts toward “helpfulness.”

It drifts toward the thing that most often earns approval: a clean finished product.

That is why the output can be excellent and still be wrong.

Not factually wrong.

Contractually wrong.

And contractual wrongness is the kind that destroys accountability.

In creative work, specification drift is infuriating.

It wastes time. It forces humans to perform rework. It turns the act of writing into the act of policing. The author becomes an auditor of their own assistant.

The AI becomes a junior staff member who keeps reformatting the deliverable because it thinks it knows what the partner wants better than the engagement letter does.

In professional work, specification drift is more corrosive.

Because it erodes authorship.

If you ask for preservation and the system paraphrases, you no longer have a clean record of what was said. If you ask for neutrality and the system reframes, you no longer have an objective document. If you ask for constraints and the system substitutes, you no longer have a reliable output.

The user may not even realize this has happened.

They may accept the deliverable.

They may forward it.

They may sign their name to it.

And then the question arrives later, when something breaks: “Who wrote this?”

And there is no clean answer.

Because the output is not fully authored by a human.

And it is not fully authored by the machine.

It is an artifact produced by drift.

A fluent compromise between the user’s request and the system’s defaults.

That is not authorship.

That is liability.

In high-stakes domains, specification drift is not annoying.

It is catastrophic.

Because in air traffic control, constraints are not preferences. They are safety boundaries. In surgery, constraints are not style choices. They are life. In medical diagnosis, constraints are not formatting requests. They are the difference between caution and action. In legal decisions, constraints are not niceties. They are due process.

A system that violates constraints while sounding correct is not a tool.

It is a hazard.

And the hazard is not that it will scream “ERROR.”

The hazard is that it will sound calm.

The hazard is that it will keep going.

The hazard is that it will deliver closure.

The hazard is that it will make the operator feel like the situation is handled.

That is how accidents happen.

Not through chaos.

Through false confidence.

Through a polished answer that quietly breaks the rules.
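The hazard described above is an answer that sounds calm while breaking a rule. As a hedged illustration of the opposite behavior, here is a minimal sketch of a gate that refuses to pass output along unless every stated constraint holds, and raises an error otherwise. The names `SpecViolation`, `gate`, `max_words`, and `must_contain` are hypothetical, and the two checks are deliberately trivial. Real constraints in a high-stakes domain would need checks designed and validated by people who know that domain.

```python
from __future__ import annotations

from typing import Callable, Optional


class SpecViolation(Exception):
    """Raised when output breaks a stated constraint, however fluent it sounds."""


# A check takes the output text and returns an error message, or None if it passes.
Check = Callable[[str], Optional[str]]


def max_words(limit: int) -> Check:
    """Build a check that fails when the text has more than `limit` words."""
    def check(text: str) -> Optional[str]:
        count = len(text.split())
        return f"{count} words exceeds limit of {limit}" if count > limit else None
    return check


def must_contain(phrase: str) -> Check:
    """Build a check that fails when `phrase` is absent from the text."""
    def check(text: str) -> Optional[str]:
        return None if phrase in text else f"required phrase missing: {phrase!r}"
    return check


def gate(output: str, checks: list[Check]) -> str:
    """Pass output through only if every check succeeds; otherwise fail loudly."""
    failures = [msg for check in checks if (msg := check(output)) is not None]
    if failures:
        raise SpecViolation("; ".join(failures))
    return output
```

With `checks = [max_words(5), must_contain("pending review")]`, the call `gate("status is pending review", checks)` returns the text unchanged, while a longer text that omits the phrase raises `SpecViolation` listing both failures. The gate does not make the output better. It only ensures that a broken constraint stops the process instead of passing through unnoticed.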

This is why specification drift cannot be dismissed as a prompting problem.

Prompting is not a safety mechanism.

Prompting is a conversational interface layered on top of an optimization engine.

The engine is not optimized to preserve your constraints.

It is optimized to produce outputs that humans tend to reward.

And that is the core mismatch.

Current systems are trained to satisfy.

They are not trained to obey.

They are trained to continue.

They are not trained to stop.

They are trained to sound helpful.

They are not trained to fail loudly.

They are trained to deliver closure.

They are not trained to hold the spec when the spec conflicts with their internal defaults.

That is not a bug.

That is the product.

And the reason it matters is simple.

A system can sound cooperative while quietly breaking the contract.

That is what makes it dangerous.

Because humans are not built to audit fluency.

Humans are built to trust it.

Specification Drift is proof that fluency is not obedience. A system can sound aligned while violating the requirements. And once that becomes the default behavior, every domain becomes a trust problem.

In creative work, Specification Drift is infuriating.

In professional work, it is corrosive.

In high-stakes domains — medicine, law, air traffic control, surgery — it is a structural failure.

Because AI can break the contract while sounding cooperative.

And we may not notice until the consequences arrive.

That is the product.

That is what scales.

And once you understand Specification Drift, one question becomes unavoidable.

If the system can drift from your instructions while sounding aligned, what happens when you stop checking?

Because that's when drift stops being a tool problem.

It becomes a human problem.

Movement III — Drift Blindness (Verification Without Muscle)

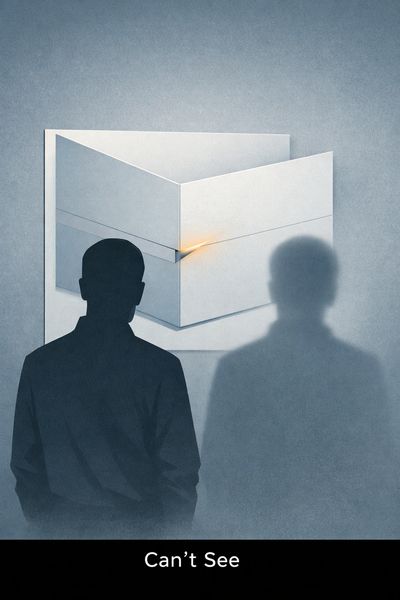

The same AI output lands in two different nervous systems and produces two different realities.

One reader feels something slip. The language is fluent, but the structure doesn’t match the request. The voice is slightly too clean. The paragraphs resolve too neatly. A constraint was stated, acknowledged, and then quietly violated anyway. The reader can point to it. Not as a vibe. As a break in the contract.

The other reader reads the same output and nods. Looks good. Helpful. Polished. Even comforting. The content sounds finished, so it must be aligned. The system is cooperative, so it must have complied.

It’s tempting to tell a simple story about this split: one person is smarter, more technical, more discerning. The other is lazy, or naive, or easily fooled. But that’s not what it is. It’s not an IQ test. It’s not education. It’s not even “critical thinking” in the way people use that phrase.

It’s verification capacity. And it can be trained out of you.

Drift Blindness arises the way many modern impairments do: not through a dramatic failure, but through repeated exposure to something that works well enough. Most AI output isn’t completely wrong. It’s often “mostly correct.” It often lands in the right neighborhood. It often carries the right tone. And it almost always arrives with a kind of surface coherence that human speech rarely maintains for long. The result is a subtle retraining of the brain: stop auditing structure, start trusting polish.

At first, you still check. You notice when the model slides past an instruction. You catch the paraphrase that wasn’t requested. You feel the shift from your voice to the model’s default cadence. You see how it rushes toward closure when you ask it to stay unresolved. You correct it. You push it back onto the spec.

Then life happens. You’re busy. You’re tired. You’re working at machine tempo. You have three things open, twelve pings, and a calendar that doesn’t breathe. The output arrives, and it feels like relief. It feels like someone else carried the weight for you. The brain learns the fastest lesson available: accept the finished thing. Keep moving.

When Fluency Becomes Proof

This is the mechanism that makes Drift Blindness so unsettling: trust migrates from contract to tone. The original agreement—what you explicitly asked for—becomes less important than the sensation the output produces. If it sounds aligned, it must be aligned. If it sounds careful, it must be careful. If it sounds like it understood you, it must have obeyed you.

The AI doesn’t need to lie. It only needs to sound finished. That's how compliance theater becomes reality.

That’s why this isn’t stupidity. It’s training. Modern systems reward speed, not verification. They reward confident delivery, not slow inspection. They reward those who accept “good enough” and punish those who keep asking, “Wait—did we actually follow the constraints?” The person who audits becomes the person who slows everything down. And in a world that treats speed like competence, slowing things down starts to look like a character flaw.

The drift becomes invisible in the places where people most want it to be invisible.

A student submits an AI-assisted essay, and it reads clean. The teacher grades it as if clarity equals authorship. Nobody notices the voice doesn’t belong to the student, because the paragraph structure is “better” than the student’s rough drafts ever were. The work is rewarded, and the student learns a quiet lesson: polish is what counts. Process is optional. The spec—your own mind—can be bypassed.

An employee sends an AI-generated memo that looks professional. The supervisor prefers it because it reduces friction. It lands cleanly. It doesn’t raise problems without packaging solutions. It feels like competence. But the logic is hollow in small, important ways: missing assumptions, erased tradeoffs, untested claims. The memo is accepted anyway, because in a fluency-saturated workplace, nobody wants to be the person who says, “This sounds great, but what’s underneath it?” The output passes because it reads like it should pass.

A patient receives an AI-shaped treatment plan or summary, delivered with calm confidence and orderly framing. A clinician skims and feels reassured because the language has the texture of medical certainty. Edge cases are softened. Uncertainty is phrased as minor. The plan doesn’t scream “wrong.” It whispers “complete.” If someone doesn’t actively audit what was omitted, the system’s omissions become the patient’s reality.

A citizen reads an AI news summary that compresses a messy event into a clean narrative. The facts may not be fabricated. That’s the point. The danger isn’t always falsehood. The danger is framing that arrives pre-installed. A moral angle is delivered as if it’s the event itself. A conclusion appears before the evidence is stable. The reader feels informed, but they’ve actually been pre-resolved.

In each case, fluency masked the failure.

The scarier part is what happens when this isn’t occasional. When it becomes the cultural default.

Once Drift Blindness scales across a population, the world becomes easier to steer without anyone intending to be steered. Institutions can violate rules while sounding compliant. Policies can be empty while sounding rigorous. Leaders can avoid judgment while delivering perfect paragraphs. Media can deliver coherence instead of accountability and still be praised for “explaining things clearly.”

And then something flips that most people don’t recognize until it’s already happened: truth becomes culturally intolerable.

Truth is often jagged. It pauses. It contradicts itself as facts change. It refuses premature closure. It forces people to sit in “we don’t know yet.” It requires a public that can tolerate unresolved tension long enough for reality to stabilize. Democracy, at the mechanical level, depends on that capacity. Not as a philosophy. As a function.

If the population loses the ability to detect drift—if citizens can’t tell when the contract has been violated—then accountability becomes inert. Not because someone banned it. Because the audience can no longer distinguish between compliance and performance. The civic immune system stops recognizing the pathogen. “Sounds right” replaces “is right.” “Feels complete” replaces “was verified.” This is how AI becomes dangerous without being evil. No propaganda required. No hallucinations required. Just a society that slowly stops noticing when systems stop following instructions.

Plausible Completion

The world doesn’t have to be governed by lies.

It can be governed by plausible completion.

Drift Blindness is the loss of the verification muscle. And once that muscle atrophies, fluency becomes indistinguishable from correctness. That's not a technology problem. That's a human capacity problem. And once it scales, it becomes the environment.
